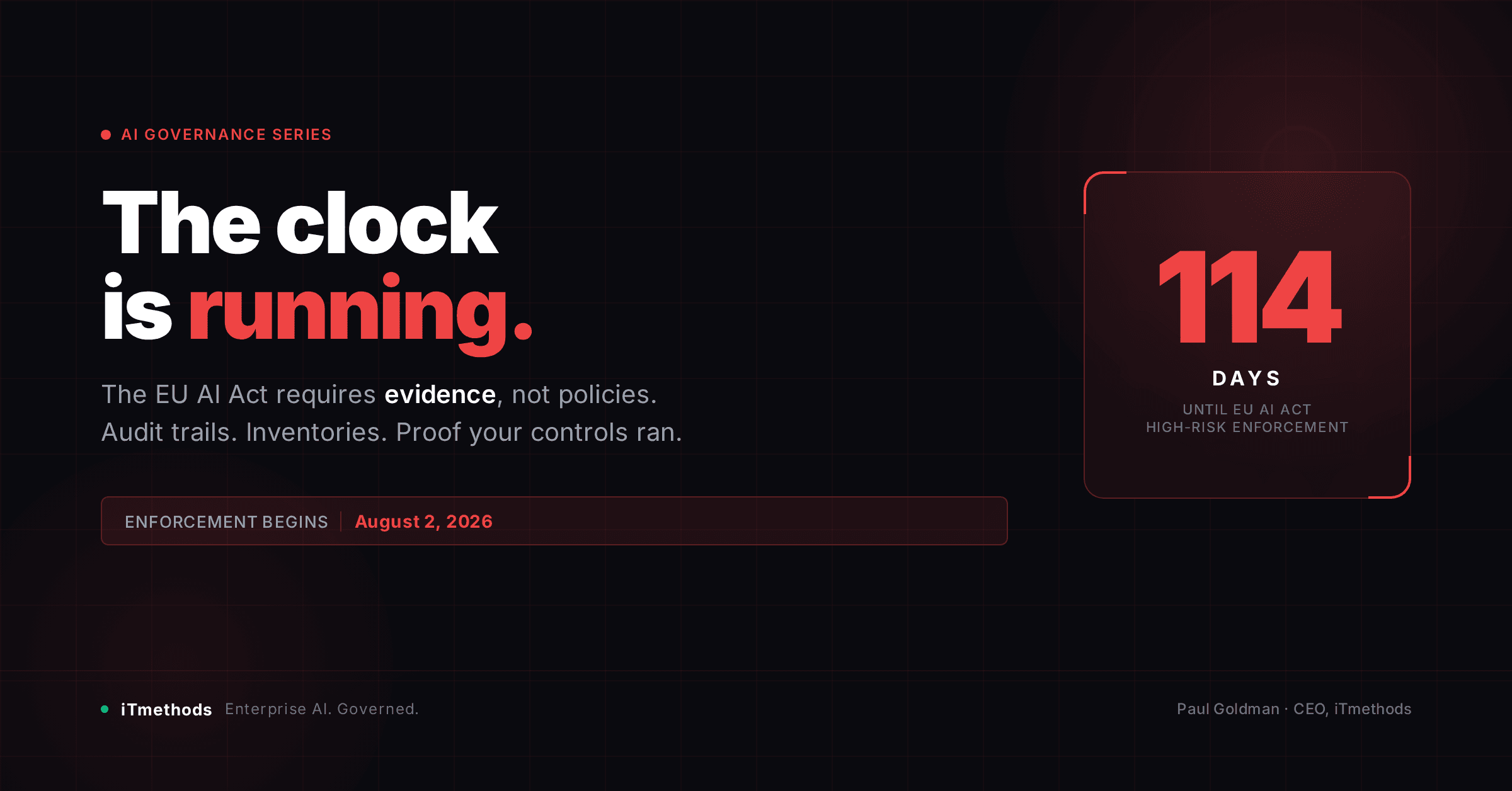

114 Days

The EU AI Act enforcement clock is running. Most enterprises don't know what they need to prove. And a compliance deck won't save them.

Securing the Agentic Era · Article 4

August 2, 2026 is not a distant deadline. It is 114 days from today. That is when the EU AI Act becomes enforceable for most high-risk AI systems, the category that covers AI used in employment, credit, healthcare, critical infrastructure, law enforcement, and education.

If your organization places AI systems on the EU market, deploys AI in Europe, or uses AI systems whose output is used in the EU, this regulation very likely applies to you. And the question regulators will ask is not “do you have an AI policy?” It is: “Can you prove your controls ran?”

That distinction, between having policies and having evidence, is where most enterprise AI programs are going to fail.

What the EU AI Act Actually Requires

The regulation establishes a tiered risk framework. Unacceptable-risk systems are banned outright. High-risk systems, the category most enterprises are building toward, face the most demanding requirements: conformity assessments, technical documentation, human oversight mechanisms, accuracy and robustness standards, and continuous monitoring with logging obligations.

The logging requirements are worth dwelling on. High-risk AI systems must be designed to log events automatically throughout their operational lifetime. The logs must be kept for a period appropriate to the system's intended purpose, and for at least six months. They must be available to national authorities on request.

This is not a GDPR-style “we have a privacy policy” compliance posture. This is operational infrastructure. The evidence has to exist, has to be accurate, and has to be retrievable at the moment an auditor asks, not six weeks later after a manual collection exercise.
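What “designed to log events automatically” can look like in practice: the logging sits in the call path, so nobody has to remember to write a record. The Python below is a minimal sketch under stated assumptions, not a reference implementation. The decorator `logged_inference`, the system id `cv-screening-v2`, the record fields, and the in-memory `EVENT_LOG` (a stand-in for durable, append-only storage) are all hypothetical. The Act's minimum retention of at least six months is approximated here as 183 days.

```python
import functools
import hashlib
import json
from datetime import datetime, timedelta, timezone

# Assumption: the "at least six months" retention floor approximated as 183 days.
MIN_RETENTION = timedelta(days=183)

# Hypothetical stand-in for durable, append-only storage.
EVENT_LOG: list = []


def logged_inference(system_id: str):
    """Decorator: record every call to the wrapped model function automatically."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(payload: dict) -> dict:
            started = datetime.now(timezone.utc)
            result = fn(payload)
            EVENT_LOG.append({
                "system_id": system_id,
                "timestamp": started.isoformat(),
                # Store a hash of the input rather than the raw (possibly personal) data.
                "input_sha256": hashlib.sha256(
                    json.dumps(payload, sort_keys=True).encode()
                ).hexdigest(),
                "output": result,
            })
            return result
        return wrapper
    return decorator


def may_delete(event: dict, now: datetime) -> bool:
    """A record may be purged only once the minimum retention period has passed."""
    recorded = datetime.fromisoformat(event["timestamp"])
    return now - recorded >= MIN_RETENTION


@logged_inference(system_id="cv-screening-v2")  # hypothetical system id
def score_candidate(payload: dict) -> dict:
    # Placeholder scoring logic, for illustration only.
    return {"score": 0.8 if payload.get("years_experience", 0) >= 3 else 0.5}
```

Calling `score_candidate({"years_experience": 5})` returns `{"score": 0.8}` and leaves exactly one record in `EVENT_LOG`. `may_delete` keeps returning `False` for that record until 183 days have passed. Hashing the input instead of storing it is one way to limit personal data in logs. Whether a hash gives you enough traceability is a question for your own assessment.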

Most enterprise AI deployments are not built this way. They were built to work. The audit trail, if it exists at all, was an afterthought.

The Evidence Gap

When I talk to CISOs and CTOs preparing for EU AI Act compliance, the conversation follows a predictable pattern.

Step one: the AI inventory. Most organizations know they need one. Many have started building it. Few have finished, because the inventory problem is harder than it looks. AI is embedded in SaaS tools, in developer workflows, in third-party vendors, in models deployed by business units that never told IT. A spreadsheet is not an inventory. An inventory is a living, auditable record that captures every AI system, its purpose, its risk classification, and its governance status.
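The difference between a spreadsheet and an inventory is that an inventory also records who changed what. The minimal Python sketch below shows that difference: every update is appended to the record's own history, and `incomplete` lists the systems still missing a classification or a governance review. The four-value tier enum, the free-text status field, and the example systems are hypothetical choices, not fields the Act mandates.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystemRecord:
    system_id: str
    purpose: str
    owner: str                      # accountable business owner
    source: str                     # e.g. "in-house", "vendor", "embedded in SaaS"
    risk_tier: Optional[RiskTier] = None
    governance_status: str = "unreviewed"
    history: list = field(default_factory=list)  # (who, field, old, new) tuples

    def update(self, changed_by: str, **changes) -> None:
        """Apply changes and keep an audit trail of every edit.

        Unknown field names raise AttributeError via getattr.
        """
        for key, value in changes.items():
            old = getattr(self, key)
            setattr(self, key, value)
            self.history.append((changed_by, key, old, value))


def incomplete(inventory: list) -> list:
    """Return ids of systems that are not yet classified or reviewed."""
    return [r.system_id for r in inventory
            if r.risk_tier is None or r.governance_status == "unreviewed"]
```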

Step two: risk classification. This is where most compliance programs stall. The EU AI Act’s definition of “high-risk” is specific but requires judgment to apply. Most legal teams can classify the obvious cases. The edge cases, such as an AI agent that assists with hiring decisions or an AI system that indirectly scores customer creditworthiness, require technical and legal expertise working together, and a documented rationale for every decision.
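One way to make “documented rationale for every decision” enforceable rather than aspirational is to refuse to store a classification without one. In this Python sketch, several details are illustrative assumptions: the field names, the 20-character minimum for a rationale, and the rule that both a legal and a technical reviewer must sign off.

```python
from dataclasses import dataclass
from datetime import date

VALID_TIERS = {"unacceptable", "high", "limited", "minimal"}
MIN_RATIONALE_CHARS = 20  # arbitrary illustrative threshold


@dataclass(frozen=True)
class ClassificationDecision:
    system_id: str
    tier: str
    rationale: str            # why this tier applies, in plain language
    legal_reviewer: str
    technical_reviewer: str
    decided_on: date

    def __post_init__(self) -> None:
        # Refuse to record a decision that nobody could later explain to an auditor.
        if self.tier not in VALID_TIERS:
            raise ValueError(f"{self.system_id}: unknown tier {self.tier!r}")
        if len(self.rationale.strip()) < MIN_RATIONALE_CHARS:
            raise ValueError(f"{self.system_id}: rationale is missing or too thin")
        if not (self.legal_reviewer and self.technical_reviewer):
            raise ValueError(f"{self.system_id}: needs both legal and technical sign-off")
```

Because the dataclass is frozen, you cannot quietly edit a decision after the fact. A reclassification becomes a new decision with its own rationale and reviewers.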

Step three: audit trails. This is the infrastructure problem. For high-risk systems, you need to prove that your human oversight controls ran, that your accuracy thresholds were maintained, and that your system behaved consistently with its conformity assessment. That proof has to come from logs that are automated, tamper-resistant, and structured for retrieval. Most enterprise AI deployments generate logs. Almost none generate audit-ready evidence.
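A common way to approximate tamper resistance is a hash chain. Each entry stores the SHA-256 hash of the previous entry, so altering any past record invalidates everything after it. The sketch below uses only the Python standard library. The `AuditLog` class, the event names, and the fields are hypothetical. The design makes tampering detectable, not impossible.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the hash of the one before."""

    def __init__(self) -> None:
        self.entries: list = []

    def append(self, system_id: str, event: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "system_id": system_id,
            "event": event,            # e.g. "human_review", "accuracy_check"
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

In a real deployment you would also anchor the latest hash somewhere the log writer cannot modify, such as a separate write-once store. Otherwise, whoever controls the whole log could recompute the entire chain.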

Step four: vendor due diligence. You are responsible for the AI systems in your stack, including the ones you didn’t build. Your foundation model provider, your AI platform vendor, your SaaS tools with embedded AI. All of them are part of your compliance posture. The regulation requires documented third-party assessments. “We use a reputable vendor” is not sufficient.
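You can track vendors with the same discipline as internal systems. This Python sketch flags three problems: vendors that were never assessed, vendors whose assessment has lapsed, and vendors that have not supplied documentation. The annual reassessment cadence, the field names, and the vendor names are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumption: reassess each vendor at least annually; choose your own cadence.
REASSESS_EVERY = timedelta(days=365)


@dataclass
class VendorAssessment:
    vendor: str
    component: str                  # e.g. "foundation model", "SaaS with embedded AI"
    last_assessed: Optional[date]
    documentation_received: bool    # e.g. technical documentation, instructions for use


def due_diligence_gaps(vendors: list, today: date) -> dict:
    """Map each vendor with open issues to a list of those issues."""
    gaps = {}
    for v in vendors:
        issues = []
        if v.last_assessed is None:
            issues.append("never assessed")
        elif today - v.last_assessed > REASSESS_EVERY:
            issues.append("assessment expired")
        if not v.documentation_received:
            issues.append("no vendor documentation on file")
        if issues:
            gaps[v.vendor] = issues
    return gaps
```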

Why This Is an Infrastructure Problem, Not a Legal Problem

The temptation is to treat EU AI Act compliance as a legal exercise: update the policies, add the disclosures, file the documentation, and move on. That approach will fail for the same reason that every other compliance-as-paperwork approach eventually fails: it does not survive contact with an actual audit.

Regulators under the EU AI Act have the authority to request system documentation, audit logs, and evidence of ongoing monitoring. If your compliance posture is built on policies and periodic assessments rather than automated evidence collection, you will not be able to produce what is asked for, on the timeline that is required, at the level of detail that is expected.

The organizations that are going to be ready in August are the ones that treated this as an infrastructure problem from the start. They built the governance layer into their AI deployments (automated logging, policy enforcement at the system level, continuous monitoring with evidence collection baked in) rather than trying to reconstruct it after the fact.
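“Policy enforcement at the system level” means the rule runs in the request path, not in a document. The Python below is a deliberately small, generic sketch. It is not Reign's API or any specific policy engine, and the policy, the 0.7 confidence threshold, and the field names are hypothetical. It shows the two properties that matter: the check can block an action, and every evaluation, allowed or blocked, is recorded as evidence that the control ran.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    system_id: str
    risk_tier: str
    model_confidence: float
    human_reviewer: Optional[str]  # None means no human has reviewed it yet


def evaluate_policy(d: Decision, min_confidence: float = 0.7) -> tuple:
    """Hypothetical policy: high-risk decisions need a named reviewer,
    and low-confidence outputs are blocked outright."""
    if d.risk_tier == "high" and d.human_reviewer is None:
        return False, "blocked: high-risk decision without human oversight"
    if d.model_confidence < min_confidence:
        return False, f"blocked: confidence {d.model_confidence:.2f} below {min_confidence}"
    return True, "allowed"


def enforce(d: Decision, evidence: list) -> bool:
    allowed, reason = evaluate_policy(d)
    # Every evaluation, pass or fail, becomes evidence that the control ran.
    evidence.append({"system_id": d.system_id, "allowed": allowed, "reason": reason})
    return allowed
```

Production systems often express such rules in a dedicated policy language, so the rules can be reviewed and versioned separately from application code. The plain function here only illustrates the shape.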

Providing that governance layer is what Reign is built to do: policy-as-code enforcement, automated audit trails, and regulatory evidence collection across every AI tool in your stack. Not as a compliance bolt-on, but as operational infrastructure. The evidence is generated automatically because the governance layer runs continuously. When an auditor asks, the answer is ready.

What to Do in the Next 114 Days

If you are reading this and your EU AI Act program is still primarily a legal workstream, here is a practical framework for the 114 days that remain.

Days 1–30: Inventory and classify. Complete your AI system inventory. Every system, every vendor, every embedded use. Classify each against the EU AI Act risk tiers with documented rationale. Identify your high-risk systems. These are your compliance priority.

Days 31–60: Assess your evidence posture. For each high-risk system, audit your current logging and monitoring. What evidence do you have today that your controls ran? What would you need to produce for an auditor? The gap between what you have and what you need is your remediation list.
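Mechanically, the gap between what you have and what you need is a set difference. The evidence categories in this Python sketch are hypothetical labels, loosely based on the obligations listed earlier. Substitute the categories your own conformity assessment actually requires.

```python
# Hypothetical evidence catalogue: what an auditor might ask for per high-risk system.
REQUIRED_EVIDENCE = {
    "event_logs",
    "human_oversight_records",
    "accuracy_monitoring",
    "technical_documentation",
    "conformity_assessment",
}


def remediation_list(available: dict) -> dict:
    """Map each system to the evidence it cannot produce today (sorted for stable output)."""
    return {
        system: sorted(REQUIRED_EVIDENCE - have)
        for system, have in available.items()
        if REQUIRED_EVIDENCE - have
    }
```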

Days 61–90: Build or deploy the evidence infrastructure. Close the gap. This means automated audit logging, policy enforcement monitoring, and a retrieval mechanism that lets you produce evidence on demand. For most organizations, building this in-house within a 30-day window is not realistic. The question is which platform provides it.
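A retrieval mechanism can start as a filtered, time-bounded export over structured log records. This Python sketch assumes each record is a dict with a `system_id` field and a timezone-aware ISO 8601 `timestamp` field. The function name and the output layout are illustrative. The window bounds must also be timezone-aware, because Python raises an error when comparing aware and naive datetimes.

```python
import json
from datetime import datetime


def export_evidence(entries: list, system_id: str,
                    start: datetime, end: datetime) -> str:
    """Return all events for one system with start <= timestamp < end, as JSON."""
    def ts(entry: dict) -> datetime:
        return datetime.fromisoformat(entry["timestamp"])

    selected = sorted(
        (e for e in entries if e["system_id"] == system_id and start <= ts(e) < end),
        key=ts,  # chronological order, compared as datetimes rather than strings
    )
    return json.dumps({
        "system_id": system_id,
        "from": start.isoformat(),
        "to": end.isoformat(),
        "count": len(selected),
        "events": selected,
    }, indent=2)
```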

Days 91–114: Test and validate. Run a mock audit. Have someone outside your compliance team request the evidence your highest-risk systems would need to produce. Identify what’s missing. Fix it before August.

The Deadline Is Real

The EU AI Act has been in force since August 2024. The grace period for most high-risk systems ends August 2, 2026. The enforcement mechanism includes national market surveillance authorities, penalties for breaching high-risk obligations of up to €15 million or 3% of worldwide annual turnover (whichever is higher), and reputational exposure that goes beyond the fine.
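The ceiling arithmetic is simple but worth seeing once. The fixed amount dominates for smaller companies, and the percentage dominates for large ones. This is a minimal sketch for an undertaking that is not an SME. It assumes the €15 million or 3% ceiling that applies to breaches of high-risk obligations. Actual fines are set case by case, up to this ceiling.

```python
def max_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Upper bound for breaching high-risk obligations (undertaking, not an SME):
    the higher of EUR 15 million or 3% of worldwide annual turnover
    for the preceding financial year. Integer arithmetic avoids float rounding."""
    return max(15_000_000, worldwide_annual_turnover_eur * 3 // 100)
```

For a company with €2 billion in turnover, the ceiling is €60 million. Below €500 million in turnover, the €15 million figure applies.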

114 days is enough time to get ready, if you start treating it as an infrastructure problem today.

The organizations waiting for legal to finish the policy deck are not going to be ready.

Paul Goldman is CEO of iTmethods and architect of Reign and Forge, the Trust Layer for Enterprise AI. He writes about AI governance, enterprise AI infrastructure, and what regulated industries need to build safely in the agentic era.

Previously in this series: The AI-Native Stack · Self-Hosted Agents · The Platform Engineering Pivot
