The AI-Native Stack: What It Actually Looks Like

Three layers separate the companies building with AI from the companies being disrupted by it

Every enterprise says they're adopting AI. Almost none have rearchitected for it.

I talk to CTOs and platform engineering leaders every week. The pattern is the same: they've rolled out Copilot or Cursor to a few teams, maybe stood up a ChatGPT Enterprise license, perhaps approved Claude for engineering. They call it "AI adoption." Their board decks show growing AI spend. The innovation team has demos.

But when I ask three questions, the conversation shifts.

Can you tell me which AI agents have access to your production systems right now? Can you show me an audit trail of what those agents did last week? And can your platform team deploy a new AI capability to 500 developers by Friday?

The silence tells me everything. They've adopted AI tools. They haven't built an AI-native stack.

The Three Layers

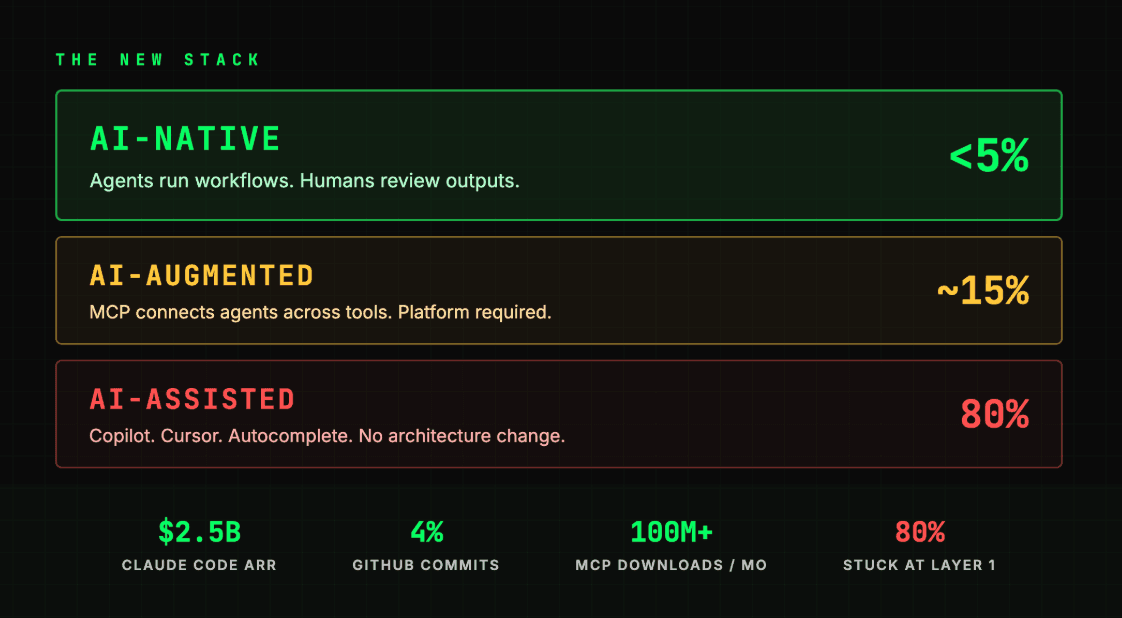

After working with enterprise engineering organizations for 21 years, I see three distinct maturity levels in how teams integrate AI into their development stack. Most are stuck at the first one.

Layer 1. AI-Assisted

This is where 80% of enterprises live today. Individual developers use AI coding assistants (Copilot, Cursor, Claude Code) to write code faster. The tools sit on top of the existing stack. Nothing changes architecturally. The developer is still the decision-maker; the agent is a sophisticated autocomplete.

Productivity gains are real but modest. Studies suggest a 20-30% improvement in code generation speed. The ceiling is low because the AI operates in isolation. It can write a function, but it can't query your monitoring stack, check deployment status, or understand the business context of what it's building.

Layer 2. AI-Augmented

This is where the leading 15-20% of organizations are arriving now. AI agents don't just write code. They participate in workflows. They connect to external systems through protocols like the Model Context Protocol (MCP), which has crossed 100 million monthly SDK downloads since Anthropic released it in late 2024.

At this layer, an engineer can ask an agent: "Why did error rates spike on the payments service?" and the agent queries Datadog, pulls recent GitHub deployments, checks Kubernetes pod health, and synthesizes an answer from all three sources. No dashboard switching. No manual correlation. The agent operates across tools, not within one.
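To make that fan-out concrete, here is a minimal Python sketch of the work behind such an answer. The helpers `query_error_rate`, `list_deployments`, and `pod_health` are hypothetical stand-ins for MCP tool calls to Datadog, GitHub, and Kubernetes, and their return values are invented stub data; a real agent would reach these systems through MCP servers and let the model do the final synthesis.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timedelta


# Hypothetical stand-ins for MCP tool calls; the data is invented for illustration.
def query_error_rate(service):
    return {"service": service, "spike_at": datetime(2025, 1, 7, 14, 5)}


def list_deployments(service):
    return [
        {"sha": "a1b2c3", "at": datetime(2025, 1, 7, 13, 58)},
        {"sha": "9f8e7d", "at": datetime(2025, 1, 6, 9, 30)},
    ]


def pod_health(service):
    return {"ready": 7, "desired": 10, "restarts": 14}


def investigate(service, window=timedelta(minutes=30)):
    # Query all three sources concurrently rather than one dashboard at a time.
    with ThreadPoolExecutor(max_workers=3) as pool:
        errors = pool.submit(query_error_rate, service)
        deploys = pool.submit(list_deployments, service)
        health = pool.submit(pod_health, service)
        spike = errors.result()["spike_at"]
        deploy_list = deploys.result()
        pods = health.result()

    # Correlate: deploys that landed inside the window before the spike are suspects.
    suspects = [d for d in deploy_list if spike - window <= d["at"] <= spike]
    findings = [f"deploy {d['sha']} landed {spike - d['at']} before the spike" for d in suspects]
    if pods["ready"] < pods["desired"]:
        findings.append(f"{pods['ready']}/{pods['desired']} pods ready, {pods['restarts']} restarts")
    return findings


print(investigate("payments"))
```

The 30-minute correlation window is an assumed default; widening it trades precision for recall.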

The jump from Layer 1 to Layer 2 is where platform teams become essential. Someone has to provide the governed infrastructure that lets agents connect to systems safely. I wrote about what that governance layer looks like, and what happens without it, in my MCP governance piece last week. The gap is real: 81% of organizations are deploying agents, but only 14.4% have security approval.

Layer 3. AI-Native

This is the frontier. Fewer than 5% of organizations are here. At this layer, AI agents don't assist human workflows. They run their own. Autonomous loops execute multi-step tasks: generate code, run tests, fix failures, commit, and repeat until done. Human engineers shift from writing code to writing specifications, reviewing agent output, and managing swarms of parallel agent sessions.

The Ralph Wiggum technique, the autonomous coding loop I wrote about two weeks ago, is the most visible example. YC hackathon teams shipped six production repositories overnight for $297 in API costs. Anthropic made it an official Claude Code plugin. And Claude Code itself is now generating $2.5 billion in annual recurring revenue, with 4% of all GitHub commits coming from the tool. This isn't an experiment anymore. It's a structural shift in how software gets built.
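The generate-test-fix cycle described above can be sketched as a plain loop with hard stops. This is an illustrative Python version, not the Ralph Wiggum plugin's implementation: `generate`, `run_tests`, and `commit` are hypothetical hooks you would wire to a model, a test runner, and git, and the iteration cap and dollar budget are assumed values. Unlike loops that commit on every pass, this variant commits only once the tests pass.

```python
from dataclasses import dataclass, field


@dataclass
class LoopResult:
    done: bool
    iterations: int
    cost_usd: float
    log: list = field(default_factory=list)


def run_loop(generate, run_tests, commit, max_iterations=10, budget_usd=5.0):
    """Generate, test, feed failures back, and repeat until green or a limit trips.

    generate(feedback) -> (patch, cost_usd)
    run_tests(patch)   -> list of failure messages (empty means all tests pass)
    commit(patch)      -> persists the finished work
    """
    log, feedback, spent = [], None, 0.0
    for i in range(1, max_iterations + 1):
        patch, cost = generate(feedback)
        spent += cost
        failures = run_tests(patch)
        if not failures:
            commit(patch)
            log.append(f"iter {i}: tests green, committed")
            return LoopResult(True, i, spent, log)
        log.append(f"iter {i}: {len(failures)} failing")
        feedback = failures  # the next generation step sees what broke
        if spent >= budget_usd:
            log.append("budget exhausted, handing off to a human")
            return LoopResult(False, i, spent, log)
    log.append("iteration cap reached, handing off to a human")
    return LoopResult(False, max_iterations, spent, log)


# Demo with fake hooks: tests fail twice, then pass on the third attempt.
outcomes = iter([["test_capture"], ["test_capture"], []])
committed = []
result = run_loop(
    generate=lambda feedback: ("patch", 0.25),
    run_tests=lambda patch: next(outcomes),
    commit=committed.append,
)
print(result)
```

The two exits that return `done=False` are the important design choice: an unattended loop should always end in a human handoff, never in an unbounded retry.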

At Layer 3, project management fundamentally changes. Tasks, sprints, and workflows become version-controlled code artifacts, an approach I call Project Management as Code. The platform team's mandate expands from "enable developers" to "enable and govern AI agents."
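As a small illustration of Project Management as Code, a task can live in the repository as a structured file that goes through review like any other change. The JSON schema, field names, and the two guardrails below are hypothetical examples, not a standard format; a CI job could run `problems` on every task file touched by a pull request.

```python
import json
from dataclasses import dataclass

# A task file checked into the repo (e.g. tasks/PAY-142.json); the schema is hypothetical.
TASK_JSON = """
{
  "id": "PAY-142",
  "title": "Retry idempotent payment captures",
  "sprint": "2025-S03",
  "acceptance": ["test_capture_retries_once", "test_no_double_charge"],
  "owner": "agent:payments-loop",
  "reviewer": "human:alice"
}
"""


@dataclass(frozen=True)
class Task:
    id: str
    title: str
    sprint: str
    acceptance: tuple
    owner: str
    reviewer: str


def problems(raw):
    """Return policy violations for a parsed task file (empty list means valid)."""
    issues = []
    if not raw.get("acceptance"):
        issues.append(f"{raw['id']}: at least one acceptance test is required")
    if raw["owner"].startswith("agent:") and not raw["reviewer"].startswith("human:"):
        issues.append(f"{raw['id']}: agent-owned tasks need a human reviewer")
    return issues


def load_task(text):
    raw = json.loads(text)
    issues = problems(raw)
    if issues:
        raise ValueError("; ".join(issues))
    return Task(**{**raw, "acceptance": tuple(raw["acceptance"])})


task = load_task(TASK_JSON)
print(task.id, task.acceptance)
```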

This is the AI-native stack. And it requires entirely new infrastructure.

The New Primitives

If you're a platform engineer, your mental model for infrastructure is probably containers, orchestration, CI/CD, observability, and an internal developer platform. The AI-native stack doesn't replace these. It adds new primitives on top.

MCP Servers. The Integration Layer

Model Context Protocol is becoming the connective tissue between AI agents and enterprise systems. Think of MCP servers the way you think of API endpoints, but designed to be consumed by agents rather than by human-written client code. An MCP server describes what it can do, what inputs it expects, and what it returns, which lets agents discover and use tools autonomously at runtime.
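A hand-rolled sketch makes the describe-then-call cycle concrete. MCP messages are JSON-RPC 2.0: a client calls `tools/list` to discover each tool's `name`, `description`, and JSON Schema `inputSchema`, then calls `tools/call` with a tool name and `arguments`. The `get_deploy_status` tool and its canned response are hypothetical, and this sketch skips transport, initialization, and most of the specification; in practice you would build servers with an official MCP SDK.

```python
# Tool descriptors in the shape MCP uses for tools/list results.
# "get_deploy_status" and its handler are hypothetical.
TOOLS = [
    {
        "name": "get_deploy_status",
        "description": "Return the latest deployment status for a service.",
        "inputSchema": {
            "type": "object",
            "properties": {"service": {"type": "string"}},
            "required": ["service"],
        },
    }
]

HANDLERS = {"get_deploy_status": lambda args: f"{args['service']}: healthy"}


def text_result(text, is_error):
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle(request):
    """Answer a JSON-RPC 2.0 request for tools/list or tools/call."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        tool = next((t for t in TOOLS if t["name"] == params["name"]), None)
        args = params.get("arguments", {})
        if tool is None:
            result = text_result(f"unknown tool: {params['name']}", True)
        else:
            # Check required inputs against the advertised schema before running.
            missing = [k for k in tool["inputSchema"].get("required", []) if k not in args]
            if missing:
                result = text_result(f"missing arguments: {missing}", True)
            else:
                result = text_result(HANDLERS[tool["name"]](args), False)
    else:
        error = {"code": -32601, "message": "Method not found"}
        return {"jsonrpc": "2.0", "id": request["id"], "error": error}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "get_deploy_status", "arguments": {"service": "payments"}}}
print(handle(call)["result"]["content"][0]["text"])
```

Tool-level failures come back as a normal result with `isError` set, so the agent can read the message and correct its call; only protocol-level problems use a JSON-RPC error.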

Your internal developer platform should expose capabilities as MCP servers: deployment tools, monitoring dashboards, incident response playbooks, infrastructure provisioning. When you do, every AI agent in your organization gains access to those capabilities through a standardized protocol.

This is happening faster than most people realize. Major platform vendors are shipping MCP servers to general availability. AI agents can now read, search, and write directly inside project management tools, CRMs, and collaboration platforms. One-third of agent operations in these integrations are writes, not just reads. Agents are doing real work inside the tools your teams already use: Salesforce, ServiceNow, Workday. The ecosystem is forming fast. The question isn't whether your toolchain will support MCP. It's whether your platform team is building the governed infrastructure to manage it when it does.

Agent Orchestration. The Execution Layer

As agents move from assistants to autonomous workers, you need orchestration: managing multiple agent sessions running in parallel, routing tasks to the right agent based on capability and context, handling failures and retries, and tracking state across long-running agent workflows.
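Here is a compact sketch of those four responsibilities in one place: capability-based routing, parallel sessions, retries with exponential backoff, and per-task state. The agent names, capability labels, and the `run(agent, task)` callable are hypothetical; a real orchestrator would persist state and talk to actual agent sessions.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical agent pool: agent name -> task kinds it can handle.
AGENTS = {"reviewer": {"code_review"}, "fixer": {"bug_fix", "refactor"}}


def route(task):
    for agent, capabilities in AGENTS.items():
        if task["kind"] in capabilities:
            return agent
    raise LookupError(f"no agent can handle {task['kind']}")


def run_with_retries(run, task, attempts=3, backoff_s=0.01):
    for attempt in range(1, attempts + 1):
        try:
            return run(route(task), task)
        except TimeoutError:
            if attempt == attempts:
                raise
            time.sleep(backoff_s * 2 ** (attempt - 1))  # exponential backoff


def orchestrate(tasks, run, max_parallel=4):
    state = {t["id"]: "pending" for t in tasks}  # per-task status across the run

    def one(task):
        state[task["id"]] = "running"
        try:
            output = run_with_retries(run, task)
            state[task["id"]] = "done"
            return output
        except Exception as exc:
            state[task["id"]] = f"failed: {exc}"
            return None

    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(one, tasks)), state


# Demo: t1 times out once before succeeding; t3 has no capable agent.
attempts_seen = {}


def fake_run(agent, task):
    attempts_seen[task["id"]] = attempts_seen.get(task["id"], 0) + 1
    if task["id"] == "t1" and attempts_seen["t1"] == 1:
        raise TimeoutError("agent session timed out")
    return f"{agent} handled {task['id']}"


tasks = [{"id": "t1", "kind": "bug_fix"}, {"id": "t2", "kind": "code_review"},
         {"id": "t3", "kind": "deploy"}]
results, state = orchestrate(tasks, fake_run)
print(results, state)
```

Only timeouts are retried here; a routing failure is permanent, so it fails fast instead of burning retries.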

The patterns here are emerging rapidly. Vercel Labs released a Ralph loop agent implementation, and LangChain provides agent orchestration frameworks. But for most enterprises, the orchestration layer will be something the platform team builds on top of these primitives, tailored to your specific workflows, security requirements, and toolchain.

Context Management. The Memory Layer

AI agents are only as good as the context they receive. The most advanced teams are building context management systems that provide agents with project specifications, codebase maps, documentation, and institutional knowledge, assembled dynamically for the task at hand.
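A deliberately simple sketch of dynamic context assembly: score each available source against the task and pack the best ones into a fixed budget. Keyword overlap stands in for whatever retrieval you actually use (embeddings, a code graph), word counts stand in for real token counts, and the file names are hypothetical.

```python
import re


def words(text):
    # Rough tokenizer: lowercase alphanumeric runs, underscores kept.
    return re.findall(r"[a-z0-9_]+", text.lower())


def assemble_context(task, sources, budget=60):
    """Return names of the most relevant sources that fit within `budget` words."""
    query = set(words(task))
    scored = []
    for name, text in sources.items():
        overlap = len(query & set(words(text)))
        if overlap:
            scored.append((overlap, name, len(words(text))))
    scored.sort(key=lambda s: (-s[0], s[1]))  # most relevant first, ties by name
    picked, used = [], 0
    for _, name, cost in scored:
        if used + cost <= budget:
            picked.append(name)
            used += cost
    return picked


SOURCES = {
    "spec/payments.md": "Payment captures must be idempotent and retried once on timeout.",
    "docs/onboarding.md": "Welcome to the team. Set up your laptop first.",
    "src/capture.py": "def capture(payment_id): retry on timeout with idempotency key",
}
print(assemble_context("Make payment capture retries idempotent", SOURCES))
```

The irrelevant onboarding doc never reaches the agent, and a tighter budget drops the lower-scoring source first.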

This is where the distinction between "AI-assisted" and "AI-native" becomes stark. An AI-assisted developer pastes code into a chat window. An AI-native organization has infrastructure that automatically provides agents with the right context for every task.

The Governance Plane. The Control Layer

This is the primitive most organizations skip, and the one that matters most.

Every MCP server you deploy, every agent you authorize, every autonomous loop you run creates an AI action that touches your systems. Without governance, you have no visibility into what agents are doing, no control over what they can access, no audit trail for compliance, and no ability to respond when something goes wrong.
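Those four gaps (visibility, access control, audit, response) can all be closed at a single choke point that every agent action passes through. A minimal sketch follows; `AgentGovernor` and its methods are hypothetical, and a production version would write to append-only storage and take identities from your identity provider rather than from in-memory sets.

```python
import json
import time


class AgentGovernor:
    """Hypothetical control point: inventory, allowlist, audit trail, kill switch."""

    def __init__(self):
        self.allowed = {}     # agent_id -> tools it may call (doubles as the inventory)
        self.revoked = set()  # agents switched off during an incident
        self.audit = []       # one JSON line per attempted action, allowed or not

    def register(self, agent_id, tools):
        self.allowed[agent_id] = set(tools)

    def revoke(self, agent_id):
        self.revoked.add(agent_id)

    def call(self, agent_id, tool, args, fn):
        if agent_id in self.revoked:
            decision = "denied: revoked"
        elif tool not in self.allowed.get(agent_id, set()):
            decision = "denied: not allowed"
        else:
            decision = "allowed"
        entry = {"ts": time.time(), "agent": agent_id, "tool": tool,
                 "args": args, "decision": decision}
        self.audit.append(json.dumps(entry))
        if decision != "allowed":
            raise PermissionError(f"{agent_id} -> {tool}: {decision}")
        return fn(**args)


gov = AgentGovernor()
gov.register("triage-bot", ["read_logs"])
print(gov.call("triage-bot", "read_logs", {"service": "payments"},
               lambda service: f"logs for {service}"))
gov.revoke("triage-bot")  # incident response: cut the agent off immediately
try:
    gov.call("triage-bot", "read_logs", {"service": "payments"}, lambda service: service)
except PermissionError as err:
    print(err)
```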

I wrote about this extensively last week. The MCP governance gap is the most urgent infrastructure problem in enterprise AI right now. AI agents outnumber human users 82 to 1 in enterprise environments. Only 21% of organizations maintain a real-time inventory of their agents. NIST launched a federal initiative in February to create standards for agent identity and authorization. The EU AI Act's next enforcement phase begins in five months.

Gartner forecast that 80% of large software engineering organizations would establish platform engineering teams by 2026. We're here now. Nearly 90% already operate internal developer platforms. But almost none have extended their platform to govern AI agents. That's the gap. And it's the gap that defines whether you're truly AI-native or just AI-assisted with more tools.

What the Leading Organizations Are Building

The companies furthest along the AI-native journey share common patterns.

Internal MCP Catalogs

A curated, searchable registry of approved MCP servers: the AI equivalent of an internal API catalog. Engineers discover what agent capabilities are available, which are production-certified, and what governance policies apply. Vendors are building their own MCP galleries, but the enterprises leading here build internal catalogs covering the internal APIs and tools that no vendor gallery will ever include.
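At its simplest, an internal catalog is structured metadata plus a search that defaults to production-certified entries. The entries, owners, and policy labels below are hypothetical, and a real catalog would typically sit behind your developer portal rather than in a Python list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    description: str
    owner: str
    certified: bool  # passed production certification
    policy: str      # governance policy that applies when agents use it


# Hypothetical entries, including internal servers no vendor gallery would list.
CATALOG = [
    CatalogEntry("datadog-metrics", "Query service metrics and monitors", "sre", True, "read-only"),
    CatalogEntry("deploy-api", "Trigger and roll back internal deployments", "platform", True, "approval-required"),
    CatalogEntry("billing-ledger", "Search the internal billing ledger", "finance-eng", False, "read-only"),
]


def search(query, production_only=True):
    """Case-insensitive search over names and descriptions."""
    q = query.lower()
    return [
        e for e in CATALOG
        if (q in e.name.lower() or q in e.description.lower())
        and (e.certified or not production_only)
    ]


for entry in search("deploy"):
    print(entry.name, entry.owner, entry.policy)
```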

Agent Sandboxes

Governed environments where autonomous agent loops can run safely, with file system isolation, network controls, time and cost limits, and comprehensive logging. This is the infrastructure that makes techniques like the Ralph Wiggum loop safe for enterprise use. Without sandboxes, your developers are running autonomous agents on their personal machines with --dangerously-skip-permissions and full access to everything their workstation can reach.
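The sketch below covers only part of that list: a throwaway working directory, a wall-clock limit, a stripped environment, and a log record for each step. It uses only `subprocess` and `tempfile` from the Python standard library and does not provide real file system or network isolation; those need a container or microVM with egress rules, and cost limits belong in the loop controller. Treat it as an outline of the controls, not a sandbox.

```python
import subprocess
import sys
import tempfile


def run_sandboxed(cmd, timeout_s=5.0, max_output=10_000):
    """Run one agent step in a throwaway directory with a time limit and minimal env.

    Not real isolation: the process can still read other paths and reach the
    network. Use a container or microVM with egress rules for that.
    """
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                cmd, cwd=workdir, capture_output=True, text=True,
                timeout=timeout_s, env={"PATH": "/usr/bin:/bin"},
            )
            status = "ok" if proc.returncode == 0 else f"exit {proc.returncode}"
            output = proc.stdout[:max_output]  # cap log size per step
        except subprocess.TimeoutExpired:
            status, output = "killed: time limit", ""
    return {"cmd": cmd, "status": status, "output": output}  # one log record per step


print(run_sandboxed([sys.executable, "-c", "print('hello from the sandbox')"]))
```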

Spec-Driven Development Pipelines

Instead of writing code directly, senior engineers write specifications (PRDs, acceptance criteria, test definitions) that agents execute against. The pipeline includes automated validation, human review gates, and progressive deployment. The engineers who adapt to this model produce dramatically more. The ones who don't are still writing code one function at a time while autonomous loops ship entire repositories overnight.
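The gates in that pipeline can be expressed as a short function. In this sketch the spec's acceptance criteria are test names, `approve` is the human review gate, and `healthy` checks each rollout stage; all three, and the rollout percentages, are hypothetical placeholders for your test runner, review tool, and deployment system.

```python
def run_pipeline(spec, agent_output, approve, stages=(1, 10, 50, 100),
                 healthy=lambda pct: True):
    """Move agent output through validation, human review, and progressive rollout."""
    # Gate 1: automated validation against the spec's acceptance tests.
    missing = set(spec["acceptance"]) - set(agent_output["passed"])
    if missing:
        return f"rejected: failing acceptance tests {sorted(missing)}"
    # Gate 2: a human reviews the spec and the agent's output together.
    if not approve(spec, agent_output):
        return "rejected: reviewer declined"
    # Gate 3: widen exposure stage by stage, stopping at the first unhealthy signal.
    for pct in stages:
        if not healthy(pct):
            return f"rolled back at {pct}%"
    return f"deployed to {stages[-1]}%"


spec = {"acceptance": ["test_retries_once", "test_no_double_charge"]}
output = {"passed": ["test_retries_once", "test_no_double_charge"]}
print(run_pipeline(spec, output, approve=lambda s, o: True))
```

Putting the cheap automated gate first means reviewers only spend time on output that already satisfies the spec.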

Unified Observability

A single pane of glass that shows both human and agent activity across the development lifecycle: which agents are running, what they're accessing, how much they're spending, and whether they're succeeding or looping. Microsoft's Cyber Pulse AI Security Report found 29% of employees using unsanctioned AI agents. You can't observe what you don't know exists, which is why the visibility layer has to come first.
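A unified view starts with one event stream for humans and agents alike. This sketch rolls hypothetical events up into per-actor spend, flags actors repeating the same action (a crude looping signal), and lists agents that appear in the stream but not in your registered inventory. The event fields and the threshold of three repeats are assumptions.

```python
from collections import Counter, defaultdict

# Hypothetical events; in practice these would come from gateway and CI logs.
EVENTS = [
    {"actor": "human:alice", "action": "git.push", "cost_usd": 0.0},
    {"actor": "agent:fixer", "action": "run_tests", "cost_usd": 0.12},
    {"actor": "agent:fixer", "action": "run_tests", "cost_usd": 0.12},
    {"actor": "agent:fixer", "action": "run_tests", "cost_usd": 0.12},
    {"actor": "agent:shadow", "action": "crm.export", "cost_usd": 0.03},
]


def summarize(events, known_agents, loop_threshold=3):
    spend = defaultdict(float)
    repeats = Counter()
    for e in events:
        spend[e["actor"]] += e["cost_usd"]
        repeats[(e["actor"], e["action"])] += 1
    agents = sorted(a for a in spend if a.startswith("agent:"))
    return {
        "agents": agents,
        "spend_usd": {a: round(s, 2) for a, s in spend.items()},
        "looping": sorted({a for (a, _), n in repeats.items() if n >= loop_threshold}),
        "unsanctioned": [a for a in agents if a not in known_agents],
    }


print(summarize(EVENTS, known_agents={"agent:fixer"}))
```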

Governance-as-Code

AI governance policies defined declaratively and deployed through the same CI/CD pipelines as application code: which agents can access which tools, what approval is required for which actions, and how long autonomous loops can run before human review. The organizations doing this well aren't slower. They're faster, because teams trust the governed infrastructure enough to adopt it broadly.
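A policy file checked into the repository and a small evaluator are enough to show the idea. The JSON schema, agent names, and limits below are hypothetical; many teams would use a dedicated policy engine such as Open Policy Agent instead of hand-written evaluation, but the shape of the decision is the same.

```python
import json

# Policy file versioned in the repo and deployed through CI (the schema is hypothetical).
POLICY = json.loads("""
{
  "agents": {
    "fixer": {"tools": ["read_repo", "open_pr"], "max_loop_minutes": 60},
    "deployer": {"tools": ["deploy"], "max_loop_minutes": 15}
  },
  "require_approval": ["deploy", "delete_resource"]
}
""")


def evaluate(policy, agent, tool, loop_minutes):
    """Decide what happens when `agent` calls `tool` after running for `loop_minutes`."""
    rules = policy["agents"].get(agent)
    if rules is None or tool not in rules["tools"]:
        return "deny"
    if loop_minutes > rules["max_loop_minutes"]:
        return "pause_for_review"
    if tool in policy["require_approval"]:
        return "needs_approval"
    return "allow"


print(evaluate(POLICY, "deployer", "deploy", loop_minutes=5))
```

Because the policy is data, a change like raising `max_loop_minutes` goes through the same pull request review and audit history as any code change.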

The Platform Team's Expanding Mandate

A year ago, platform engineering was about golden paths, self-service portals, and developer experience. That work continues. But a new layer is forming on top.

Your platform team is becoming the AI infrastructure team. The questions you're answering are evolving from "how do developers deploy?" to "how do agents deploy?" From "what's our developer experience?" to "what's our agent experience?" From "how do we govern access to production?" to "how do we govern what AI agents can do in production?"

I keep seeing this play out the same way. The organizations that build this infrastructure now (the MCP layer, the governance plane, the agent orchestration) have a compounding advantage. Every new AI capability builds on the foundation, and every new agent connects to the existing infrastructure.

The ones that wait will find themselves where most are today: individual developers using AI tools in isolation, with no visibility, no governance, and no path to scale.

Getting Started: The First 90 Days

If you're a platform engineering leader and this resonates, here's where to start.

Inventory every AI tool in use

Audit which systems those tools can access. Map the MCP connections on developer workstations. You'll find connections nobody in security approved. You need to understand where you are before you can decide where to go.
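A first-pass inventory can be scripted. Several MCP clients, including Claude Desktop and Cursor, keep their server list in a JSON file under a top-level `mcpServers` key; this sketch assumes that format, takes the file paths as input rather than guessing per-OS locations, and flags any server not on a hypothetical approved list. The demo writes a throwaway config with placeholder server names so the example runs anywhere.

```python
import json
import tempfile
from pathlib import Path


def inventory(config_paths, approved):
    """List MCP servers found in client config files and flag unapproved ones.

    Assumes each file is JSON with a top-level "mcpServers" object mapping a
    server name to either a local command ("command"/"args") or a remote "url".
    """
    found = []
    for path in map(Path, config_paths):
        if not path.is_file():
            continue
        try:
            servers = json.loads(path.read_text()).get("mcpServers", {})
        except json.JSONDecodeError:
            continue  # an unreadable config should not stop the sweep
        for name, spec in servers.items():
            target = spec.get("url") or " ".join([spec.get("command", "")] + spec.get("args", []))
            found.append({"file": str(path), "server": name,
                          "target": target, "approved": name in approved})
    return found


# Demo: a throwaway config resembling what a workstation might hold (placeholder names).
demo_dir = Path(tempfile.mkdtemp())
config = demo_dir / "mcp.json"
config.write_text(json.dumps({"mcpServers": {
    "github": {"command": "npx", "args": ["-y", "example-github-server"]},
    "prod-db": {"url": "https://db.internal.example/mcp"},
}}))
rows = inventory([config, demo_dir / "missing.json"], approved={"github"})
for row in rows:
    print(row["server"], row["target"], "approved" if row["approved"] else "UNAPPROVED")
```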

Stand up your first governed MCP servers

Start with read-only observability tools. Deploy an MCP gateway for centralized auth and audit logging. Establish the infrastructure pattern that everything else will build on. This is minimum viable AI-native, and most organizations haven't done even this.
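The core of such a gateway is small: authenticate the caller once, route the call to the right upstream MCP server, refuse anything outside the read-only allowlist during this first phase, and log every decision centrally. The upstream URLs, the `<upstream>.<tool>` naming convention, the tool names, and the token below are hypothetical, and a real gateway would also proxy the JSON-RPC traffic and integrate with your identity provider.

```python
import hashlib
import hmac

# Hypothetical gateway configuration.
UPSTREAMS = {"datadog": "https://mcp-datadog.internal.example",
             "github": "https://mcp-github.internal.example"}
READ_ONLY_TOOLS = {"datadog.query_metrics", "github.list_deployments"}  # phase-1 allowlist
TOKENS = {hashlib.sha256(b"demo-token").hexdigest(): "agent:triage"}  # store hashes, not tokens
AUDIT = []  # central log of every request and decision


def authenticate(token):
    digest = hashlib.sha256(token.encode()).hexdigest()
    for known, identity in TOKENS.items():
        if hmac.compare_digest(digest, known):  # constant-time comparison
            return identity
    return None


def gateway(token, tool):
    """Decide whether to forward a tool call named '<upstream>.<tool>'."""
    identity = authenticate(token)
    upstream = UPSTREAMS.get(tool.split(".", 1)[0])
    if identity is None:
        decision = "401 unauthenticated"
    elif upstream is None:
        decision = "404 unknown upstream"
    elif tool not in READ_ONLY_TOOLS:
        decision = "403 write tools disabled in phase 1"
    else:
        decision = f"forward to {upstream}"
    AUDIT.append({"identity": identity, "tool": tool, "decision": decision})
    return decision
```

Calling `gateway("demo-token", "github.merge_pr")` returns the 403 decision and still leaves an audit entry: denied requests are evidence too. Moving to action-capable servers later means growing the allowlist, not rebuilding the gateway.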

Add action-capable MCP servers for high-value workflows

Pilot agent orchestration with a willing team. Define your governance-as-code policies. Build the 12-month roadmap based on what you've learned.

The AI-native stack isn't a product you buy. It's an architectural decision you make. And the window for making it thoughtfully, rather than reactively, is closing fast.

Paul Goldman is the CEO of iTmethods, where his team helps enterprises build and govern AI-native developer platforms, from MCP infrastructure to agent orchestration and compliance. This is the first article in "The New Stack" series on building AI-native organizations.

Previously: Ralph Wiggum Is Running in Your Organization · MCP Is Exploding. Your Governance Isn't Ready.

Next: Self-Hosted AI Agents Are Here. The Governance Isn’t.
